Over the last few days, I’ve been putting together my own virtual modular system: my very own instrument, something that feels crafted rather than like a one-off patch, something I can learn and perform live.

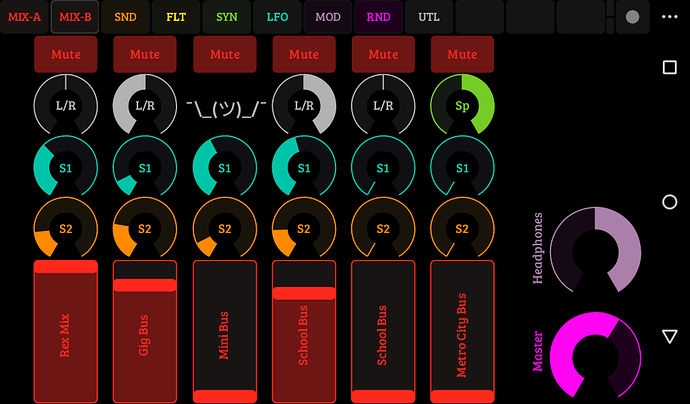

It seems most people make new songs with VCV starting with an empty rack they fill with what they need and nothing more, while people who use modular hardware have setups that evolve slowly, limited by the money they can throw at their hobby. I’m not big into formalism and genre essentialism, so I think any approach that yields fun results is fine, really. And since I came to VCV from Propellerhead’s Reason, the first approach makes a lot of sense to me. I’m young enough that software is the real thing to me. Software is what I’d rather use. I’m not using VCV as a stepping stone to buying €18954 of inferior gear that lacks total recall, with patch cables my dog would chew up. But I think it’s also fun to craft your own modular system, to make difficult choices to put together a system that’s versatile but small enough to wrangle, to learn it in depth, to know it intimately, to push it to its limits… So I decided to do that in the virtual world, and to also map it to TouchOSC so I can control it from phones and tablets, in addition to my MIDI controllers.

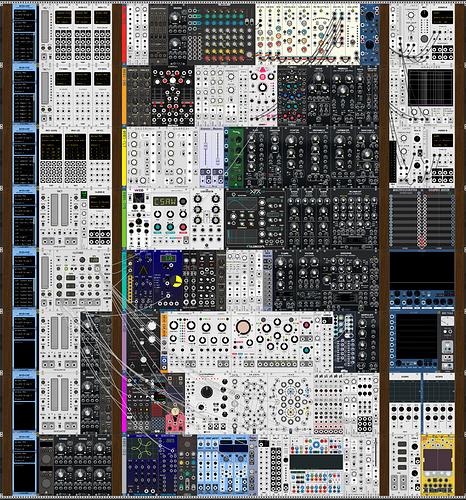

So, it’s still a work in progress, but here’s my system! It’s limited by my computer specs (it takes 50% CPU when idle) and by the complexity of navigating it, but it’s hopefully versatile enough to get a lot out of it. It’s highly focused on randomization and on slowly evolving techno jams. There’s no serious sequencer: if I want one, I’ll grab other software rather than do it in the rack.

Here’s a pic of it:

(I don’t trust this forum to let you see the full size pic, so here’s an alternative at 100% zoom: https://aria.dog/upload/2019/10/system.jpg )

It’s not set in stone yet, or ever, so feel free to get indignant I didn’t add a module you consider essential!

And here are two random TouchOSC pages, so I can perform it from tablets:

I haven’t mapped it all, but I started jamming with it, and I like the results! Here’s a quick excerpt of my experiments with it yesterday, improvised live:

https://soundcloud.com/ariasalvatrice/aria-vcv-system-prototype-wip14b

As I have two sound cards, I’ve also rigged up a headphone mix, so I can preview things before I cue them up. Hopefully I can use that to make smooth-sounding sets. I’m excited to finish mapping it to touch controllers, learn how to operate it in depth, and try to get fun performances out of it!