I’ve wanted to discuss this for a while, but it was hard to do without it looking like stealth promo for something unrelated to VCV. Now that I’ve been around for a while, I think it’s time to talk about it.

Last summer, a few weeks before I started getting into VCV, I made a song in Reason that I was really happy with.

My project at the time was to make a series of theme songs, in very disparate styles, for internet friends (without their creative input - only their opt-in consent). I used it as an opportunity to experiment with a variety of ideas and processes.

One of the most popular songs for this project was written in a very modular way: as a series of arpeggiator rules, with various pattern durations and intensities, using a variety of scales.

Let’s listen to the results first:

The result is a song with unstable tonality and no clear time signature, yet an easily followed melody with clear variations in intensity. It’s a bit hard to explain, and I know I won’t explain it well, but hopefully well enough to get the big idea across.

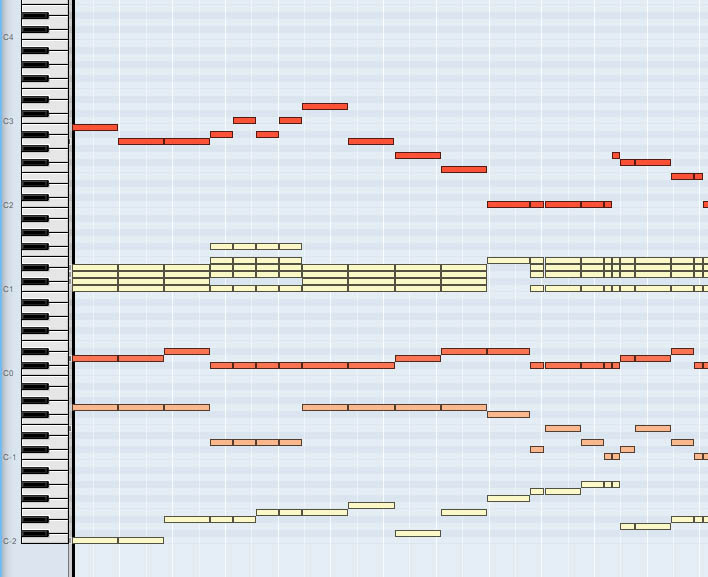

The entire song is driven by a single MIDI track:

The lowest octave picks the scale from 8 options. The second octave selects the pattern (the length of the note block matches the length of the pattern). The third octave selects the intensity. The fourth octave unmutes instruments to play. The notes above C2 are used as the root of the chord that is passed to the arpeggiators powering the song.
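To make that keyswitch layout concrete, here is a minimal Python sketch of how such a track could be decoded. It is only an illustration, not how Reason does it: the names `classify` and `conductor_state` are made up, and I am assuming the four keyswitch octaves start at MIDI note 0 and that "C2" means MIDI note 48, with that note and everything above it treated as a chord root.

```python
# Assumed layout: four keyswitch octaves starting at MIDI note 0, then chord roots.
LANES = ["scale", "pattern", "intensity", "unmute"]
ROOT_START = 48  # assumption: "C2" is MIDI note 48


def classify(note: int) -> tuple[str, int]:
    """Map a MIDI note number to (lane, value).

    Keyswitch lanes return the key's position inside its octave (0-11).
    The root lane returns the note number itself.
    """
    if not 0 <= note <= 127:
        raise ValueError(f"not a MIDI note number: {note}")
    if note >= ROOT_START:
        return ("root", note)
    return (LANES[note // 12], note % 12)


def conductor_state(held_notes: list[int]) -> dict:
    """Fold the notes sounding at one moment into a single control state."""
    state = {"scale": None, "pattern": None, "intensity": None,
             "unmute": [], "root": None}
    for note in sorted(held_notes):
        lane, value = classify(note)
        if lane == "unmute":
            # Several instruments can be unmuted at the same time.
            state["unmute"].append(value)
        else:
            state[lane] = value
    return state


if __name__ == "__main__":
    print(conductor_state([3, 14, 25, 36, 38, 50]))
```

With those assumptions, holding notes 3, 14, 25, 36, 38 and 50 selects scale 3, pattern 2 and intensity 1, unmutes instruments 0 and 2, and sets the chord root to note 50.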

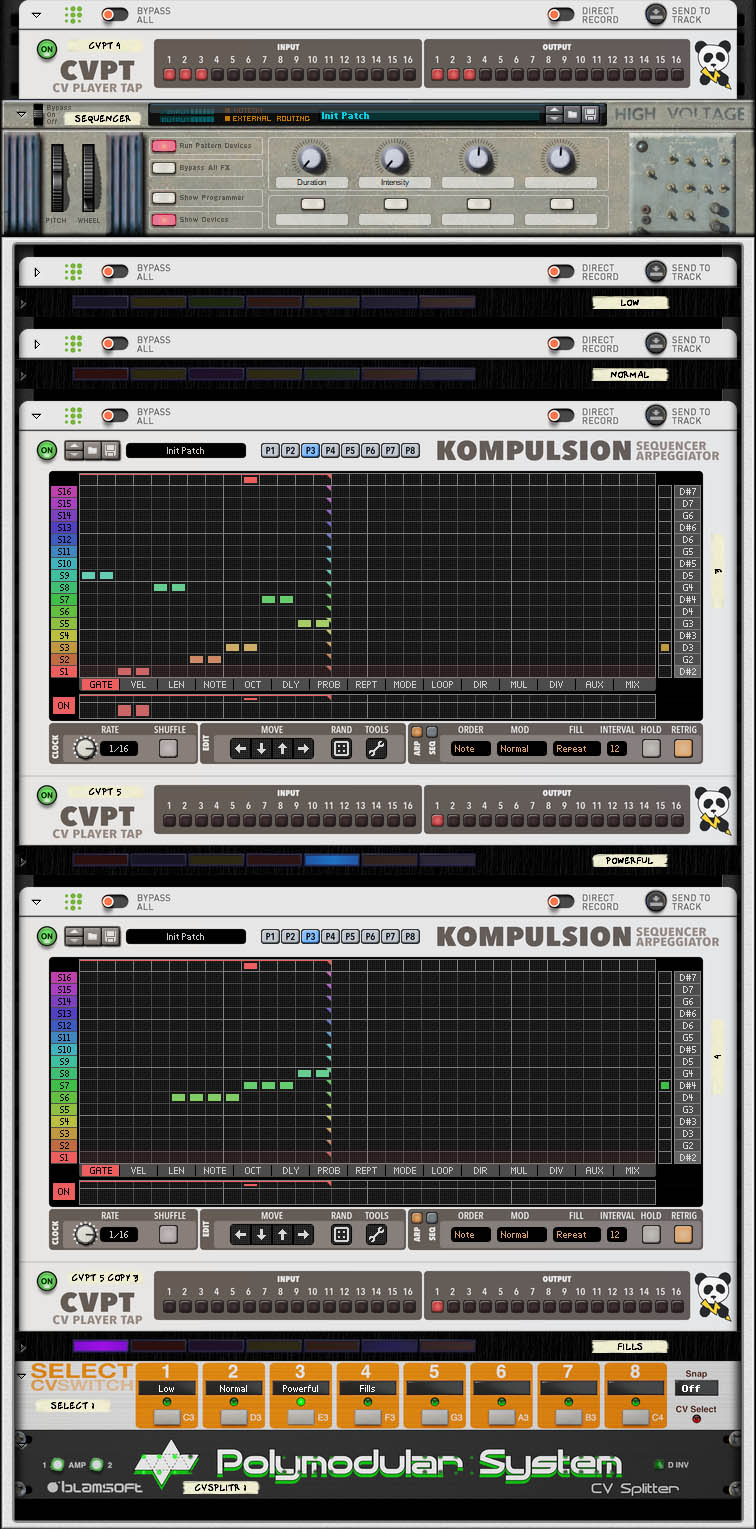

Most instruments receive the notes to play from one of those 32 patterns:

There are 4 * 8 patterns in total on 4 Kompulsion devices (only 2 depicted in the screencap above), each with a different melody, operating on abstract chord degrees rather than on known pitches. Each pattern is given 3 notes of a chord to use, and it can play them in octaves. The pattern variations are:

- 4 intensities - 1 Kompulsion device per intensity
  - Low intensity (few notes)
  - Normal intensity (more notes)
  - High intensity (very busy)
  - Fills (bridges and segues)
- 8 durations (in 16th notes) - 1 Kompulsion pattern per duration
  - 5
  - 9
  - 14
  - 16
  - 22
  - 24
  - 26
  - 28
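The idea of patterns that work on chord degrees rather than known pitches can be sketched in a few lines of Python. This is a rough illustration under my own assumptions, not what Kompulsion does internally: the step format (a chord degree plus an octave shift, or `None` for a rest), the function names, and the example melody are all invented for the sketch.

```python
DURATIONS = [5, 9, 14, 16, 22, 24, 26, 28]        # pattern lengths in 16th notes
INTENSITIES = ["low", "normal", "high", "fills"]  # one device per intensity


def pattern_slot(intensity: str, duration: int) -> tuple[int, int]:
    """Return (device index, pattern index) for one of the 4 * 8 patterns."""
    return (INTENSITIES.index(intensity), DURATIONS.index(duration))


def resolve(pattern, chord):
    """Turn abstract steps into MIDI notes for the given 3-note chord.

    Each step is None (a rest) or a (degree, octave) pair, where degree
    picks one of the three chord notes and octave shifts it by 12 semitones.
    """
    notes = []
    for step in pattern:
        if step is None:
            notes.append(None)
        else:
            degree, octave = step
            notes.append(chord[degree] + 12 * octave)
    return notes


if __name__ == "__main__":
    # A made-up 5-step pattern: the same shape works over any chord.
    five_steps = [(0, 0), None, (2, 0), (1, 1), (0, -1)]
    print(pattern_slot("high", 14))            # (2, 2)
    print(resolve(five_steps, [48, 51, 55]))   # [48, None, 55, 63, 36]
    print(resolve(five_steps, [50, 54, 57]))   # [50, None, 57, 66, 38]
```

The point of the design is that the pattern never mentions a pitch: swapping the chord changes every note while the contour and rhythm stay the same.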

Some instruments, such as the bass or drums, also had their own built-in patterns, and sometimes used other sequencing devices.

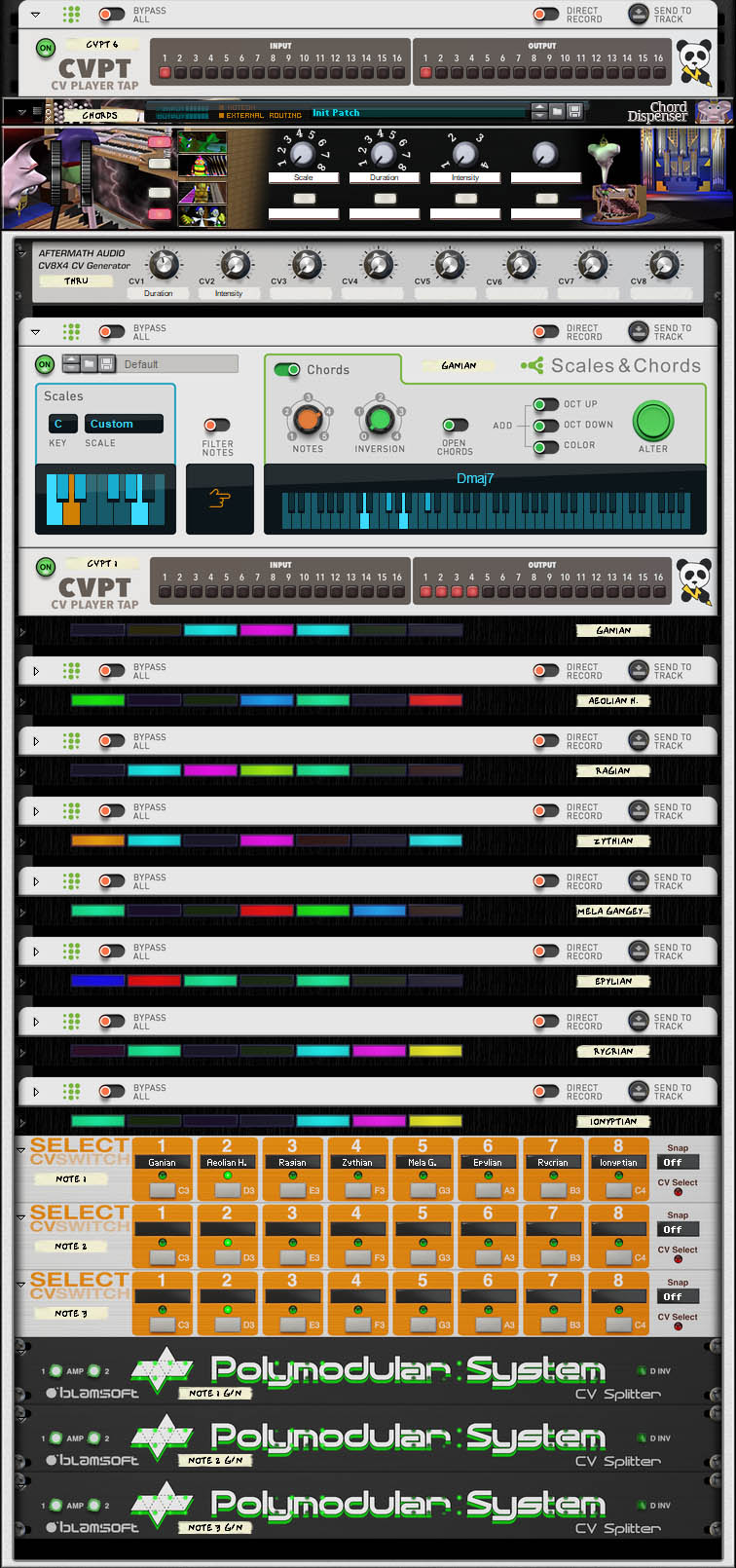

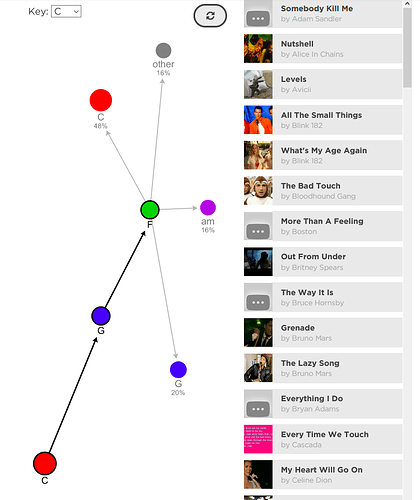

The chords used are provided by my chords dispenser & scale selector - an elaborate piece of Reason patching. I wrote in greater detail about the Reason-related parts in the Reason forums, but it will make no sense if you don’t use Reason. Or even if you use it. I barely understand why it works, honestly. The quick version: it generates chords that work in a variety of related exotic scales rooted in C, and that sound just dissonant enough for my tastes.

The actual songwriting process was not very deliberate. As it’s difficult to remember what every keyswitch does, and what 32 different patterns sound like, I had to just try things out, hear if they worked, and change the intensity, scale, or note if they didn’t, then arrange the bits so that they resemble a song.

I’m happy with the process, and very happy with the resulting song (and the subject of the song was also happy with her theme song). But ultimately, Reason remains a normal DAW with modular aspects: it’s difficult to take the idea further, or to play this song live.

One sequenced performance was frozen in time, and most of the patterns I wrote never made it into the song.

With a better method of control than a sequencer, I am certain it could be performed live - and maybe even conducted live by an untrained performer working at the macro level. That would turn the piece into a participatory art kinda thing, like a player piano you can control at a manageable level of granularity.

My question is: how would you remake this song in VCV? That’s a difficult question, so it would be more useful to pick an individual part of the problem and discuss how you would implement it. Here’s a non-exhaustive list of problems we can think about - and each question definitely has more than one good answer:

- How to generate interesting scales & chords to use in such a workflow? How to require less music theory knowledge from the performer to keep the dissonance under control, without constraining them into a very safe set of chords?

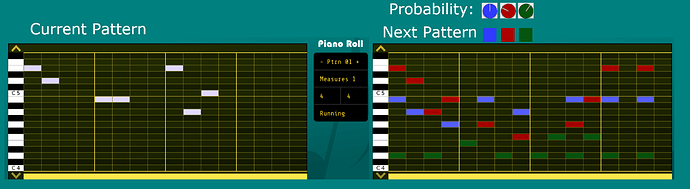

- How to sequence operations on chord degrees/scale degrees rather than known pitches, arpeggiator-style? How to preview them easily when many variations are possible?

- How to create a simple system to select a pattern from multiple categories of variations (intensity, duration)? How to dispatch them to various voices?

- How to sync up sequencers when patterns can have varying note lengths?

- How to queue up patterns? How to queue up more than one pattern in advance?

- How to easily conduct this, with a mouse or MIDI controller? How to make it manageable when playing very short patterns?

- Do any techniques, not directly relevant to this process but still similar, come to mind?

Paid VCV modules are not off-limits, but please keep answers constrained to a VCV Rack + generic MIDI controller setup: avoid third-party software that can’t be kept all in the box.

If the discussion generates any interest and people post their ideas, I’ll follow up with some of my own, but for now I’d like to hear how you would patch parts of it, and learn about the capabilities of modules I had not explored in sufficient depth. And don’t hesitate to post techniques you already saw me use in a video - documenting them might benefit others reading the thread.

I hope this thread makes sense at all! Please don’t feel you have to understand how it works in detail to reply, it’s hard to explain and I’m explaining it poorly, using weird off-label patching tricks in software you probably don’t use.

A lot of the focus of my slowly growing module collection is to allow exploring these kinds of processes - and make them work live. QQQQ in particular is a building block for future modules in my system. Hopefully the discussion will also help me think about the next pieces for my module collection.