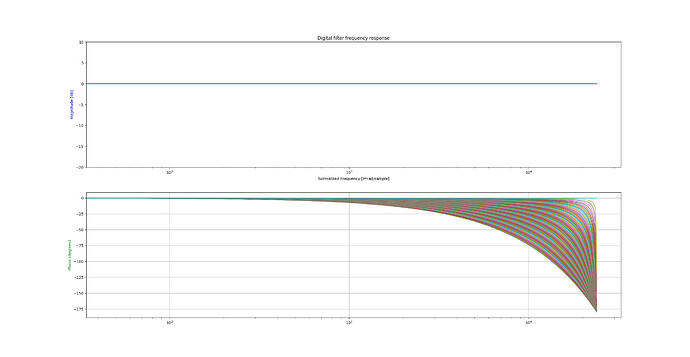

When working with fractional delay lines, all pass interpolation seems ideal because, unlike linear or Hermite interpolation, it has no magnitude loss, only phase distortion. Recently, though, I have had a lot of trouble implementing all pass interpolation without clicks and pops on sustained sounds.

All Pass Interpolation Magnitude and Phase:

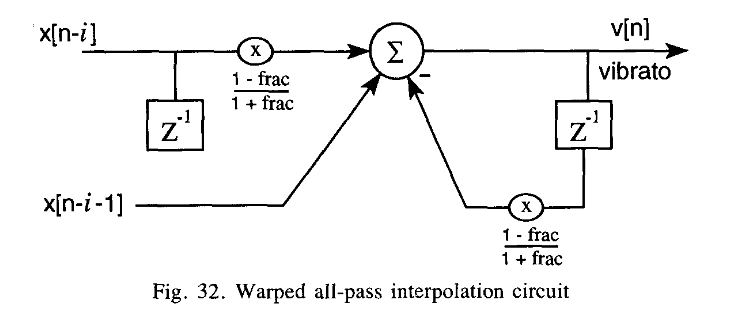

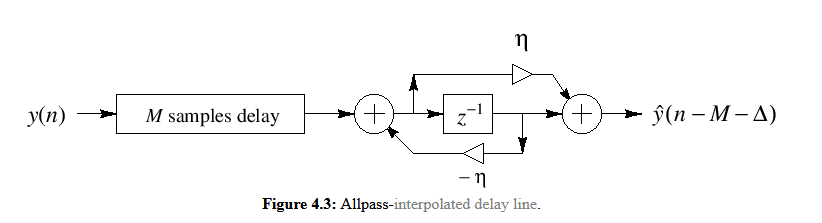

Dattorro discusses the use of all pass interpolation in detail in his “Effect Design Part 2”. I won’t go into all the details, but more or less he proposes the following block diagram as a good option for an all pass interpolator:

This is more or less the same as the all pass interpolation method proposed on JOS’s CCRMA site.
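For reference, this is the first-order all pass structure as I understand it from those two sources, written as a difference equation. The symbol Δ for the fractional delay is my own notation, and the coefficient formula is the usual low-frequency approximation rather than an exact result at all frequencies:

$$
y(n) = x(n-1) + \eta\,\bigl(x(n) - y(n-1)\bigr), \qquad \eta \approx \frac{1-\Delta}{1+\Delta}
$$

$$
H(z) = \frac{\eta + z^{-1}}{1 + \eta\,z^{-1}}
$$

The single pole sits at z = −η, so the closer η gets to 1, the longer any transient rings.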

I believe that, because all pass interpolation is recursive, it is very sensitive to changes in the coefficient “η”, and large changes produce the clicks and pops. Keeping the coefficient as close to zero as possible reduces the transient: limiting the fractional delay, frac, to the range 0.618 to 1.618 keeps η = (1 − frac)/(1 + frac) between 0.236 and −0.236. In practice, this works OK. The clicks and pops are definitely reduced using this exact method (see code below), but they are still evident on sustained sounds.
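To make the coefficient range concrete, here is a minimal sketch that maps a fractional delay in [0, 1) to η after the shift into [0.618, 1.618), and sweeps the range to find the largest magnitude. The names `AllpassCoefficient` and `MaxAbsCoefficient` are hypothetical helpers for illustration only.

```cpp
#include <cmath>

// Hypothetical helper: maps a fractional delay in [0, 1) to the all pass
// coefficient eta, after shifting the fraction into [0.618, 1.618).
inline float AllpassCoefficient(float frac) {
    if (frac < 0.618f) {
        frac += 1.0f;  // e.g. 0.3 becomes 1.3
    }
    return (1.0f - frac) / (1.0f + frac);
}

// Hypothetical helper: largest |eta| over an evenly spaced sweep of
// fractional delays in [0, 1).
inline float MaxAbsCoefficient(int steps) {
    float worst = 0.0f;
    for (int i = 0; i < steps; ++i) {
        float frac = static_cast<float>(i) / static_cast<float>(steps);
        worst = std::fmax(worst, std::fabs(AllpassCoefficient(frac)));
    }
    return worst;
}
```

Without the shift, a fraction near 0 would give η near 1, which puts the pole almost on the unit circle. With the shift, the sweep stays within roughly ±0.236.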

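    // Table size must be 2^N for some integer N, so that & (size - 1) wraps the index.
    inline float InterpolateAllPass(float* table, float index, int32_t* size,
                                    float* delayBlock) {
      // Macro (defined elsewhere) that splits index into index_integral and index_fractional.
      MAKE_INTEGRAL_FRACTIONAL(index)
      float xm = table[(index_integral - 1) & (*size - 1)];
      float xmp1 = table[index_integral & (*size - 1)];
      // Shift the fractional delay into [0.618, 1.618) so that |f| stays below about 0.236.
      if (index_fractional < 0.618f) {
        index_fractional += 1.0f;
      }
      float f = (1.0f - index_fractional) / (1.0f + index_fractional);
      // First-order all pass: y[n] = x[n-1] + f * (x[n] - y[n-1]); *delayBlock holds y[n-1].
      float v = xm + f * (xmp1 - *delayBlock);
      *delayBlock = v;
      return v;
    }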
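One thing that may be worth checking is whether the integer read position moves when 1.0 is added to the fraction. If it doesn't, the total delay jumps by one sample every time the fraction crosses 0.618. Below is a self-contained sketch of the same idea that assumes the integer part should drop by one whenever the fraction is shifted, so the total delay is unchanged. `AllpassDelay` and `Process` are hypothetical names, and the buffer convention (newest sample at the write index) is my assumption, not necessarily the one used above.

```cpp
#include <cmath>
#include <cstdint>
#include <vector>

// Hypothetical all pass interpolated delay line (illustration only).
class AllpassDelay {
 public:
    // size_pow2 must be a power of two so that & mask_ wraps the index.
    explicit AllpassDelay(uint32_t size_pow2)
        : buffer_(size_pow2, 0.0f), mask_(size_pow2 - 1) {}

    // Writes one sample and returns the input delayed by `delay` samples.
    // Assumes 1 <= delay <= size - 2.
    float Process(float in, float delay) {
        buffer_[write_ & mask_] = in;

        int32_t whole = static_cast<int32_t>(delay);
        float frac = delay - static_cast<float>(whole);
        if (frac < 0.618f) {
            frac += 1.0f;  // keep the fraction in [0.618, 1.618)
            whole -= 1;    // compensate so whole + frac is still `delay`
        }
        float eta = (1.0f - frac) / (1.0f + frac);

        uint32_t tap = write_ - static_cast<uint32_t>(whole);
        float x0 = buffer_[tap & mask_];        // x[n - whole]
        float x1 = buffer_[(tap - 1) & mask_];  // x[n - whole - 1]

        // y[n] = x1 + eta * (x0 - y[n-1]); state_ holds y[n-1].
        state_ = x1 + eta * (x0 - state_);
        ++write_;
        return state_;
    }

 private:
    std::vector<float> buffer_;
    uint32_t mask_;
    uint32_t write_ = 0;
    float state_ = 0.0f;
};
```

A quick sanity check is to feed it a ramp with a fixed delay: once the filter settles, the output should be the ramp delayed by the requested amount, whether or not the fraction was shifted. This only checks the static case and doesn't address the transient when the delay is modulated.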

Has anyone here successfully implemented an all pass interpolator that is artifact free? Upsampling by a factor of two or more reduces the artifacts greatly, but I can’t take this approach due to the CPU cost.