So… I couldn’t get this thing out of my head and came up with a fairly simple solution to detect a grid.

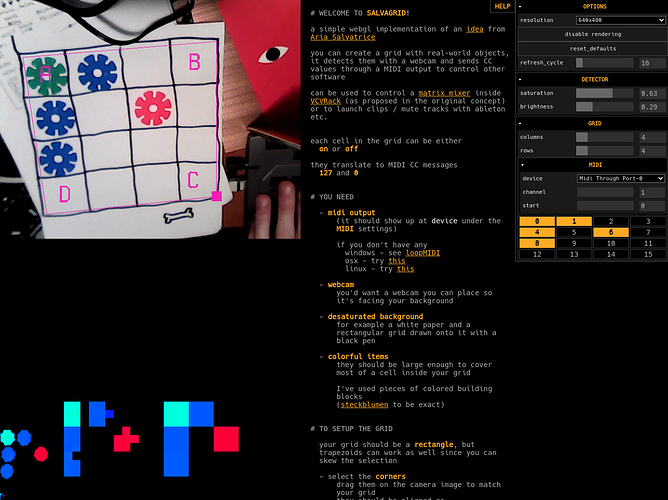

If it’s just a rectangular grid, there’s no need for any advanced blob detection. All you need is a 3D renderer that can render a textured mesh from UV coordinates and vertices, plus a way to read pixels back from the result.

You also need a fragment shader to filter/enhance the “active” pixels in the render. Mine turns every pixel whose saturation is below a threshold fully black, and pushes every pixel above the threshold to maximum saturation.
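Here’s a CPU-side sketch in TypeScript of what that filter does to a single pixel, which can be handy for testing thresholds outside the shader. It assumes HSV-style saturation and a hypothetical default threshold of 0.4. The actual shader is GLSL, and its parameters may differ.

```typescript
// RGB components in [0, 1].
type RGB = [number, number, number];

// HSV saturation: (max - min) / max, defined as 0 for black.
function saturation([r, g, b]: RGB): number {
  const max = Math.max(r, g, b);
  const min = Math.min(r, g, b);
  return max === 0 ? 0 : (max - min) / max;
}

// Pixels below the (hypothetical) threshold become black. The rest keep
// their hue and value (max channel), but saturation is pushed to 1 by
// stretching the channels so the smallest one becomes 0.
function enhancePixel([r, g, b]: RGB, threshold = 0.4): RGB {
  if (saturation([r, g, b]) < threshold) return [0, 0, 0];
  const max = Math.max(r, g, b);
  const min = Math.min(r, g, b);
  if (max === min) return [r, g, b]; // grey: no hue to saturate (only reachable with threshold 0)
  const stretch = (c: number): number => (max * (c - min)) / (max - min);
  return [stretch(r), stretch(g), stretch(b)];
}
```

Keeping the max channel fixed preserves brightness, and keeping the channels’ relative spacing preserves hue. That matters if hues are later used to mean different values.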

Once the UV coordinates are selected on the webcam’s video plane, I render a mesh from them, scaled so that every cell in the grid maps to exactly 1 pixel (the UV coordinates can be skewed, but the rendered mesh’s vertices form a clean rectangle).
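A minimal sketch of where each cell’s center lands on the video texture. It assumes the four selected UV corners are given as top-left, top-right, bottom-right, bottom-left and are interpolated bilinearly. `cellCenterUVs` is a hypothetical helper. A two-triangle THREE.js quad interpolates UVs per triangle instead, so strongly skewed corners will give slightly different results.

```typescript
type UV = { u: number; v: number };

// Linear interpolation between two UV points.
const lerp = (a: UV, b: UV, t: number): UV => ({
  u: a.u + (b.u - a.u) * t,
  v: a.v + (b.v - a.v) * t,
});

// Corners (assumed order): top-left, top-right, bottom-right, bottom-left.
// Returns the UV sampled at the center of every cell, row by row.
function cellCenterUVs(corners: [UV, UV, UV, UV], cols: number, rows: number): UV[][] {
  const [tl, tr, br, bl] = corners;
  const grid: UV[][] = [];
  for (let row = 0; row < rows; row++) {
    const t = (row + 0.5) / rows; // vertical position of the cell center
    const left = lerp(tl, bl, t);
    const right = lerp(tr, br, t);
    const line: UV[] = [];
    for (let col = 0; col < cols; col++) {
      line.push(lerp(left, right, (col + 0.5) / cols));
    }
    grid.push(line);
  }
  return grid;
}
```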

Then you can simply read pixels from this rendered image to determine whether each cell is active.
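Assume the 1-pixel-per-cell render is read back into an RGBA `Uint8Array`, for example with THREE.js’s `WebGLRenderer.readRenderTargetPixels`. Turning that buffer into a grid of on/off cells could then look like this sketch. `readCells` and its `minLevel` cutoff are hypothetical. The row flip accounts for WebGL returning the bottom row first, and you may need to drop it depending on how the mesh is oriented.

```typescript
// pixels: RGBA bytes, cols * rows pixels, bottom row first (WebGL readPixels order).
function readCells(pixels: Uint8Array, cols: number, rows: number, minLevel = 32): boolean[][] {
  if (pixels.length < cols * rows * 4) throw new Error("pixel buffer too small");
  const cells: boolean[][] = [];
  for (let row = 0; row < rows; row++) {
    const srcRow = rows - 1 - row; // flip so cells[0] is the top row
    const line: boolean[] = [];
    for (let col = 0; col < cols; col++) {
      const i = (srcRow * cols + col) * 4;
      // The shader already blacks out inactive pixels, so any bright channel counts.
      const level = Math.max(pixels[i], pixels[i + 1], pixels[i + 2]);
      line.push(level >= minLevel);
    }
    cells.push(line);
  }
  return cells;
}
```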

The 1-pixel render flickered a bit when the objects weren’t exactly centered in their cells, so I added a secondary “oversampled” render as well, with 3x3 pixels for each cell.
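The post doesn’t say how the two renders are combined. One option is a per-cell majority vote over each 3x3 block, sketched below under that assumption. `downsampleVotes` and its `minHits` parameter are hypothetical, and the function takes already-thresholded samples in top-down order.

```typescript
// samples: thresholded pixels of the oversampled render, top-down,
// (rows * factor) tall and (cols * factor) wide.
function downsampleVotes(
  samples: boolean[][],
  factor = 3,
  minHits = Math.ceil((factor * factor) / 2), // majority: 5 of 9 for 3x3
): boolean[][] {
  const rows = Math.floor(samples.length / factor);
  const cols = rows > 0 ? Math.floor(samples[0].length / factor) : 0;
  const cells: boolean[][] = [];
  for (let row = 0; row < rows; row++) {
    const line: boolean[] = [];
    for (let col = 0; col < cols; col++) {
      // Count active samples inside this cell's factor x factor block.
      let hits = 0;
      for (let dy = 0; dy < factor; dy++) {
        for (let dx = 0; dx < factor; dx++) {
          if (samples[row * factor + dy][col * factor + dx]) hits++;
        }
      }
      line.push(hits >= minHits);
    }
    cells.push(line);
  }
  return cells;
}
```

A vote makes a cell tolerate an object that only partly covers it. Lowering `minHits` makes cells easier to activate.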

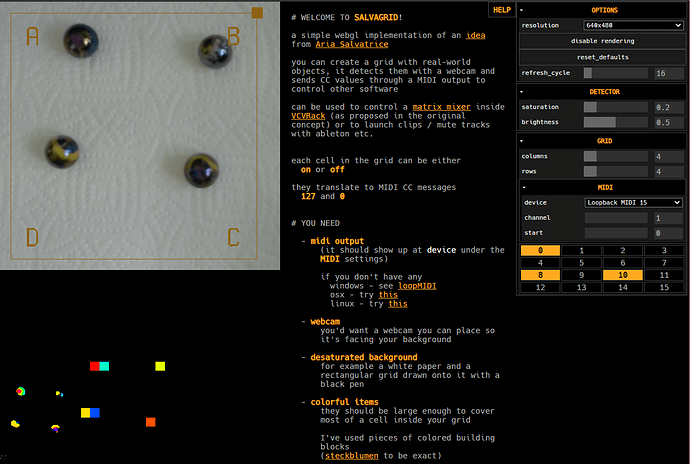

That said, I didn’t have marbles at hand, so I used some building toys (they’re quite saturated and come in different hues, and hues could mean different values too!) on a grid drawn by hand on a piece of paper.

Maybe marbles only need contrast enhancement…

^ Here, in this corner, are the actual 1-pixel renders; they might not be visible.

(Above them: the regular render, plus the 3x3-sampled and 1x1-sampled renders scaled up.)

Even though this runs in the browser, performance seems fine (though I’ve only tested with small grids, and I have a good video card).

It might be a useful starting point if you go the OpenCV/native route.

The sources are on GitLab.

(written in CoffeeScript, using webmidi.js and dat.gui.js, with THREE.js for rendering)

One problem that came up (since I use a top-down camera) is how to separate pixels coming from my hands from pixels coming from the cells. With the right values dialed in, my current setup deactivates the cells beneath my hands wherever they are (or activates them, depending on the shader parameters). It might be better to detect the hands somehow (with chroma-keying, for example) and only output pixels that are not “hand colored”. A custom minFilter for the resampling might help too.
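Here is a rough sketch of the chroma-key idea using a simple HSV key for skin tones. It also assumes one extension the post doesn’t describe: a pixel that looks like a hand leaves the cell’s previous state untouched instead of overwriting it. The hue, saturation, and brightness ranges, and the `isHandColored`/`nextCellState` helpers, are all hypothetical. They would need tuning for real lighting and skin tones, and saturated red or orange toys could still collide with the key.

```typescript
type RGB = [number, number, number]; // components in [0, 1]

// Standard RGB -> HSV conversion (h in degrees [0, 360)).
function hsv([r, g, b]: RGB): { h: number; s: number; v: number } {
  const max = Math.max(r, g, b);
  const min = Math.min(r, g, b);
  const d = max - min;
  let h = 0;
  if (d > 0) {
    if (max === r) h = (g - b) / d;
    else if (max === g) h = (b - r) / d + 2;
    else h = (r - g) / d + 4;
    h *= 60;
    if (h < 0) h += 360;
  }
  return { h, s: max === 0 ? 0 : d / max, v: max };
}

// Hypothetical skin-tone key: orange-ish hues, moderate saturation, not too dark.
// These ranges are guesses, not measured values.
function isHandColored(rgb: RGB, hueMax = 40, satMin = 0.15, satMax = 0.6, valMin = 0.3): boolean {
  const { h, s, v } = hsv(rgb);
  return h <= hueMax && s >= satMin && s <= satMax && v >= valMin;
}

// While a hand covers the cell, keep its previous state; otherwise apply the
// normal active/inactive test.
function nextCellState(prev: boolean, rgb: RGB, isActive: (rgb: RGB) => boolean): boolean {
  return isHandColored(rgb) ? prev : isActive(rgb);
}
```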

Also, the webmidi.js library doesn’t send CC values on controller numbers above 119 (probably because CC 120–127 are reserved for MIDI channel mode messages), so 16x16 mode can be a bit tricky to set up. I made cells beyond 119 overflow onto the next MIDI channel, but it’s still not ideal (it will probably be cleanest with four 8x8 grids once multiple grids are supported).
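A sketch of that overflow scheme, assuming cells are numbered row by row and each channel carries controllers 0–119 before spilling onto the next one. `cellToCc` and `cellIndex` are hypothetical helpers. The resulting `controller` and `channel` would then be passed to webmidi.js’s control-change call, whose exact signature differs between library versions.

```typescript
// CC 120–127 are MIDI channel mode messages, so cells only use controllers 0–119.
const CONTROLLERS_PER_CHANNEL = 120;

interface CcAddress {
  channel: number; // 1–16
  controller: number; // 0–119
}

// Row-major cell index.
function cellIndex(row: number, col: number, cols: number): number {
  return row * cols + col;
}

// Spill onto the next channel every 120 cells.
function cellToCc(index: number, baseChannel = 1): CcAddress {
  const channel = baseChannel + Math.floor(index / CONTROLLERS_PER_CHANNEL);
  if (channel > 16) {
    throw new RangeError(`cell ${index} does not fit on channels ${baseChannel}-16`);
  }
  return { channel, controller: index % CONTROLLERS_PER_CHANNEL };
}
```

With this layout, a 16x16 grid (256 cells) occupies channels 1–3. Four 8x8 grids (64 cells each) would each fit on a single channel.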

Had an idea while making this that I might explore now… Cut circles out of paper and color them in a rainbow cycle (like the hue ring in color pickers), then pin them onto a table or something. By reading just 1 pixel (for example, from around the 12 o’clock position on the ring) you can easily convert the picked hue into a CC value, so you get these funky paper knobs that you can turn like miniature vinyl decks!
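For the paper knobs, converting the sampled pixel’s hue into a CC value could be as simple as this sketch. It assumes 8-bit RGB input and splits the hue circle into 128 equal slices. `knobCc` is a hypothetical helper.

```typescript
// Hue in degrees [0, 360) from 8-bit RGB; grey pixels report 0.
function hueDegrees(r: number, g: number, b: number): number {
  const max = Math.max(r, g, b);
  const min = Math.min(r, g, b);
  const d = max - min;
  if (d === 0) return 0;
  let h: number;
  if (max === r) h = (g - b) / d;
  else if (max === g) h = (b - r) / d + 2;
  else h = (r - g) / d + 4;
  h *= 60;
  return h < 0 ? h + 360 : h;
}

// Map the hue circle onto 128 equal slices -> CC value 0..127.
function hueToCc(hue: number): number {
  return Math.min(127, Math.floor((hue / 360) * 128));
}

// One pixel sampled from the ring -> CC value.
function knobCc(r: number, g: number, b: number): number {
  return hueToCc(hueDegrees(r, g, b));
}
```

Because hue wraps around, turning a knob past red jumps between 127 and 0. That discontinuity is inherent to reading a single point on a hue ring.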